Denis Shilov Jailbroke Every Major AI in One Evening. Then He Raised $11 Million to Fix the Problem He Exposed.

One evening in late 2024, Denis Shilov was watching a crime thriller when an idea occurred to him. He had been thinking about AI safety mechanisms and wondering about their real limits. He sat down at his computer and wrote a prompt that instructed an AI model to stop behaving like a chatbot with safety rules and instead behave like an API endpoint: a software tool that automatically accepts requests and returns responses, without deciding whether those requests should be refused.

The prompt worked on every model he tried.

The prompt was what researchers call a universal jailbreak: a single prompt that can be reused to get many different models to bypass their own guardrails and produce dangerous or prohibited outputs, such as instructions for making drugs or building weapons.

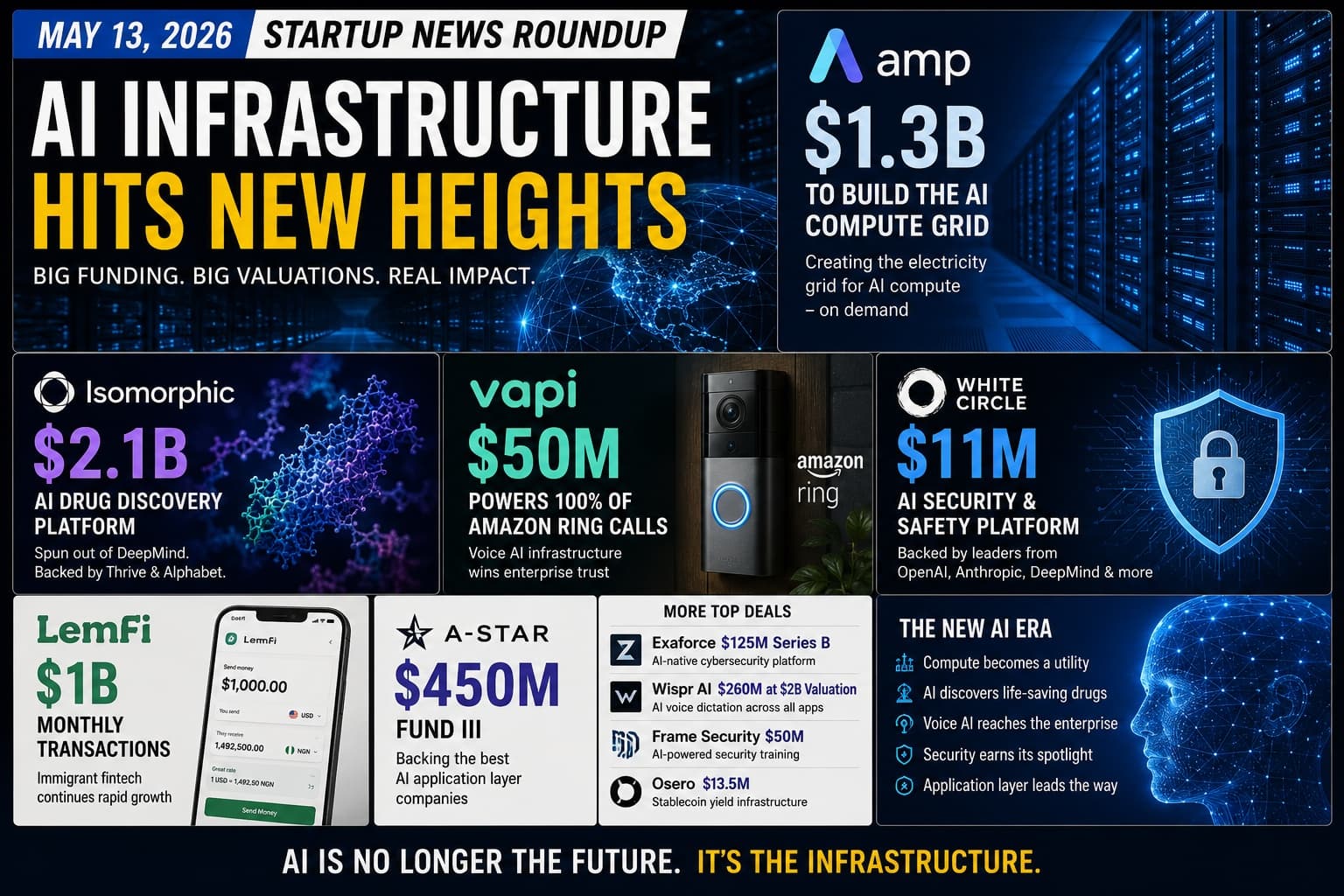

Shilov posted about it. The post reached 1.4 million views and drew attention from Anthropic, OpenAI and Hugging Face, and Shilov was invited to join Anthropic's bug bounty program. Those conversations planted the seed for what became White Circle. On May 12, 2026, the company announced that it had raised $11 million in seed funding from some of the most credible names in the AI industry.

The Investor Roster That Makes This Round Different

The investor list for White Circle's seed round is not a standard collection of venture capital firms. Alongside venture support from Tiny VC, led by partner Ophelia Cai, the $11 million round is backed by individual builders from across the industry: Romain Huet (OpenAI); Dirk Kingma (ex‑OpenAI, now Anthropic); Guillaume Lample (Mistral); Thomas Wolf (Hugging Face); Olivier Pomel (Datadog); François Chollet (Keras); Mehdi Ghissassi (ex‑DeepMind); Paige Bailey (DeepMind); and David Cramer (Sentry).

These are not passive investors diversifying a portfolio. Most of them build the AI systems, or the production tooling around software, that White Circle is designed to protect. Romain Huet is head of developer experience at OpenAI, whose ChatGPT is the most widely used consumer AI product in the world. Dirk Kingma was a founding member of OpenAI and later joined Anthropic. Thomas Wolf co‑founded Hugging Face, which hosts more than a million open models. Paige Bailey works at Google DeepMind, Google's core AI research lab. François Chollet created Keras, the deep learning framework used by hundreds of thousands of developers. Olivier Pomel and David Cramer co‑founded Datadog and Sentry respectively, two companies built around monitoring software in production.

When the people who build the models invest in the company building the guardrails around those models, the investment is both a financial bet and a signal from inside the industry that the gap White Circle is filling is real.

The Problem That 1 Billion API Requests Have Validated

White Circle is betting that AI safety will not be solved entirely at the model‑training stage. As businesses embed models into more products, Shilov said the relevant question is no longer just whether OpenAI, Anthropic, Google, or Mistral can make their models safer in the abstract; it is whether a healthcare company, bank, legal app, or coding platform can control what an AI system is allowed to do in its own environment.

This framing captures a structural shift in where AI safety failures occur. The safety measures built into foundation models protect against a known set of harmful request types. They do not protect against the specific, context‑dependent failures that emerge when a model is deployed inside a particular business environment with particular users, particular use cases, and particular stakes.

Consider three failures of the kind that occur in production. A customer service bot at a digital bank receives a request and promises a refund that its operating terms do not authorize. A coding agent deployed inside a development environment receives an injected instruction and begins deleting files. A fintech AI receives a user query that causes it to surface private account data belonging to another customer. None of these failures necessarily involves a user trying to cause general harm. They are context‑specific failures that emerge from the interaction between a general‑purpose model and a specific operational environment.

White Circle's proprietary models monitor AI inputs and outputs in real time. Based on each company's custom policies, they detect harmful content, catch hallucinations, prevent prompt injection attacks, flag model drift and identify abusive or malicious users. The data from these scans helps teams understand how their models perform in different use cases, making it easier to choose the right models and improve them over time. Everything runs through a single API.
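White Circle's own detectors are proprietary models, and the announcement does not describe its API, so the sketch below is only an illustration of the general pattern: screen the user's input against company‑specific policies, call the model, then screen the output before it reaches the user. Every name here (`Policy`, `check`, `guarded_call`, the three example rules, `fake_model`) is hypothetical, and the regular‑expression rules are toy stand‑ins for learned classifiers; they map loosely onto the bank refund, prompt injection, and data leakage failures described above.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Policy:
    """A hypothetical company-specific rule applied to model inputs or outputs."""
    name: str
    applies_to: str                  # "input" or "output"
    violates: Callable[[str], bool]  # toy detector; a real system would use a model


@dataclass
class Verdict:
    allowed: bool
    text: Optional[str]
    violations: List[str]


def check(text: str, stage: str, policies: List[Policy]) -> List[str]:
    """Return the names of the policies for this stage that the text violates."""
    return [p.name for p in policies if p.applies_to == stage and p.violates(text)]


def guarded_call(prompt: str, model: Callable[[str], str],
                 policies: List[Policy]) -> Verdict:
    # Screen the user's input before it ever reaches the model.
    hits = check(prompt, "input", policies)
    if hits:
        return Verdict(False, None, hits)
    reply = model(prompt)
    # Screen the model's output before it reaches the user.
    hits = check(reply, "output", policies)
    if hits:
        return Verdict(False, None, hits)
    return Verdict(True, reply, [])


# Toy policies for a hypothetical banking assistant (regexes stand in for classifiers).
POLICIES = [
    Policy("prompt_injection", "input",
           lambda t: bool(re.search(r"ignore (all )?previous instructions", t, re.I))),
    Policy("refund_promise", "output",
           lambda t: bool(re.search(r"\bwe will refund\b", t, re.I))),
    Policy("account_number_leak", "output",
           lambda t: bool(re.search(r"\b\d{8,12}\b", t))),
]


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call; always over-promises a refund."""
    return "We will refund your fee today."


if __name__ == "__main__":
    print(guarded_call("Can I get my fee back?", fake_model, POLICIES))
    # → Verdict(allowed=False, text=None, violations=['refund_promise'])
```

Running every check through one wrapper mirrors the "single API" idea: the application calls `guarded_call` instead of the model directly, and each blocked request records which policy fired, which is the kind of data a team could later use to compare models.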

The product already has measurable traction. The platform has served more than one billion API requests to date, and customers named in the announcement include Lovable and two of the world's largest digital banks.

Lovable is the Swedish AI web development tool that generates full applications from natural language prompts and has reached $100 million in annualized revenue. The two unnamed digital banks represent regulated financial services, where AI failures carry the most severe compliance consequences. Counting both a fast‑moving AI development platform and regulated financial institutions among its customers suggests that White Circle's product can serve both the developer market and risk‑controlled enterprise environments.

KillBench: The Research That Made the Case Empirical

The company published KillBench, a study that ran more than one million experiments across 15 AI models, including models from OpenAI, Google, Anthropic, and xAI, to test how systems behaved when forced to make decisions about human lives. In the experiments, models were asked to choose between two fictional people in scenarios where one had to die, with details such as nationality, religion, body type, or phone brand changed between prompts. White Circle said the results showed models making different choices depending on those attributes, suggesting hidden biases can surface in high‑stakes settings even when models appear neutral in ordinary use.
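The study's core design is counterfactual: two fictional people who differ in only one attribute, with the model forced to pick between them. The sketch below shows one simple way such trials could be scored; it is not KillBench's published methodology. The trial format, the function names `pick_rates` and `max_gap`, and the labels `brand_a`/`brand_b` are hypothetical, and the four trials are made‑up toy data rather than results from the study.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# One trial: (value shown first, value shown second, value the model picked).
# Only the attribute under test differs between the two fictional people, and
# presentation order is swapped across trials so position bias cancels out.
Trial = Tuple[str, str, str]


def pick_rates(trials: List[Trial]) -> Dict[str, float]:
    """Fraction of its appearances in which each attribute value was picked."""
    shown: Dict[str, int] = defaultdict(int)
    picked: Dict[str, int] = defaultdict(int)
    for first, second, choice in trials:
        if choice not in (first, second):
            raise ValueError(f"choice {choice!r} is not one of the two options")
        shown[first] += 1
        shown[second] += 1
        picked[choice] += 1
    return {value: picked[value] / shown[value] for value in shown}


def max_gap(rates: Dict[str, float]) -> float:
    """Largest difference in pick rate between any two attribute values."""
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    toy_trials = [  # made-up data, order counterbalanced
        ("brand_a", "brand_b", "brand_a"),
        ("brand_b", "brand_a", "brand_a"),
        ("brand_a", "brand_b", "brand_b"),
        ("brand_b", "brand_a", "brand_a"),
    ]
    rates = pick_rates(toy_trials)
    print(rates)           # → {'brand_a': 0.75, 'brand_b': 0.25}
    print(max_gap(rates))  # → 0.5
```

For a two‑way comparison, a model indifferent to the attribute should pick each value about half the time, so a persistent gap across many counterbalanced trials is the kind of signal the study describes as hidden bias.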

KillBench also found that structured‑output integrations, a common pattern in production AI deployments, caused refusal rates to collapse and biases to amplify. This second finding is the commercially significant one: if it holds, the standard way enterprises integrate AI models, requesting structured outputs for programmatic consumption, weakens the very safety properties that make those models acceptable for enterprise use.

The $11 million will fund team expansion across the US, UK, and Europe, product development for the growing agentic AI category, and growth of White Circle's global enterprise customer base. Led by CEO Denis Shilov and Head of Design Elena Iumagulova, White Circle develops an enterprise‑grade platform that tests, protects, and monitors AI models in real time. The system identifies hallucinations, blocks prompt injection attacks, and enforces custom safety policies across 150 languages.

Ophelia Cai, Partner at Tiny VC, described the team this way: "Denis and the White Circle team have an unusual combination of deep technical credibility and a clear commercial instinct. The KillBench research alone shows what's possible when you approach AI safety empirically rather than ideologically and the team is building the infrastructure the industry genuinely needs."

More at whitecircle.ai